In recent posts I’ve been focusing on Azure Data Factory. Today I’d like to talk about using a Stored Procedure as a sink or target within Azure Data Factory’s (ADF) copy activity.

Most times when I use copy activity, I’m taking data from a source and doing a straight copy, normally into a table in SQL Server for example. But with using a Stored Procedure I can apply some additional processing or logic or add columns to my dataset.

It’s a bit tricky to do, so let me give you a run through:

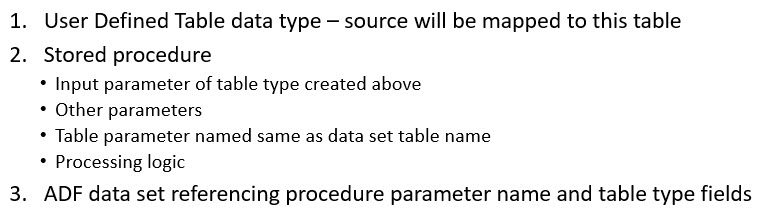

- We need to set up a few things ahead of time to use the Stored Procedure sink. I’ve listed that on the screenshot below.

- Part of the trick to using Store Procedure is we need to define in our target database a user defined table type. This is a user defined data type that has a structure of a table.

- We want this user defined table type to have similar structure to our incoming source data. So, ultimately in a few more steps we’re going to map our source data to this table type.

- Then we need to create our Stored Procedure and we’ll create a parameter in that Stored Procedure using this data type.

- The last piece of the trick here is setting up your target dataset within ADF to use this Stored Procedure.

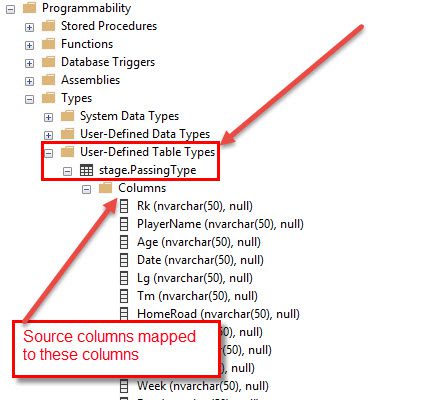

- The screenshot below is an illustration of SQL Server Management Studio. In this case, I’ve created a user defined table data type that I called “stage.PassingType.” Stage is my schema name and Passing Type is the name of the data type.

- You’ll see the columns that I defined here, and in this example, these align very closely to the file data that I’m importing into my database.

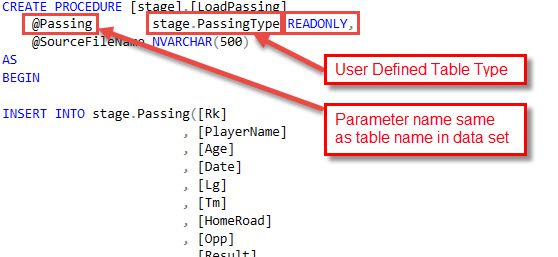

- Once I’ve got that table data type defined, I want to move on and create my Stored Procedure (see below). Inside this Stored Procedure I might have some logic. At a minimum maybe I want to add some metadata from the ADF process to my target table.

- You can see in this Stored Procedure I’m doing a simple insert, but I can call out to some functions or maybe do some data cleansing or get rid of some values that I don’t want. Anything I can do in a Stored Procedure, I can do here.

- The key here is at the top you’ll see I created a parameter using that data type that I created earlier. The parameter name I chose here was Passing. Take note of that parameter, we’ll need that in a second.

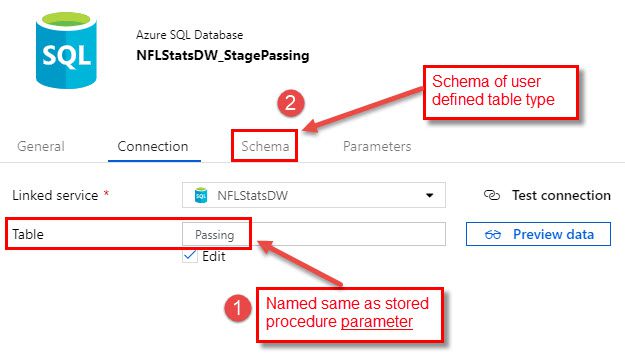

- The next thing I need to do before I get into the copy activity (see below) is configure my target data set in Data Factory.

- What I want to do, and this is a little tricky, is where it says to choose a table, I want to provide the name of the parameter that I used in my Stored Procedure. So, the Stored Procedure has a parameter of a user defined table type. It’s the name of that parameter here that I put into that table name.

- If I hit that Preview Data button, it actually won’t work because I don’t have a table in my database named “Passing”.

- The other key here is when I define the schema, I’m really mapping or including the schema of the user defined table type and not the target table.

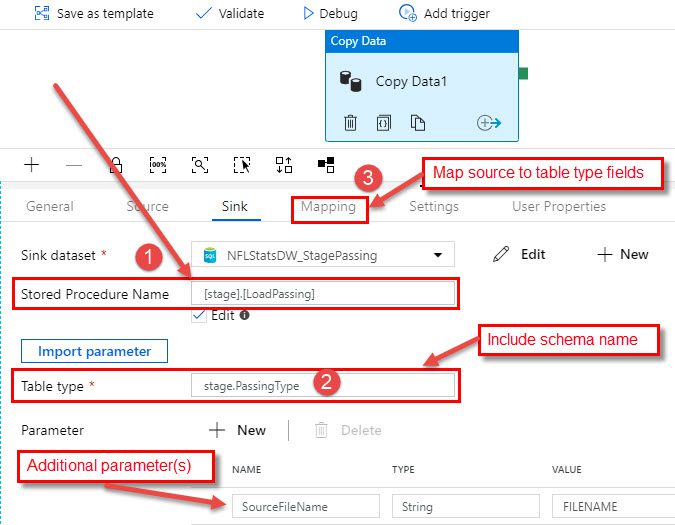

- Once I have that all set up, I’m ready to move on to the copy activity (next screenshot). For the copy activity, I’ll go ahead a choose my Stored Procedure that I defined from my list of Stored Procedures.

-

- I like to use the Import Parameter button. And if this is done right, it should recognize that I have a parameter of the table type.

- In my case I had to add the schema name to my data type there. So again, this is the name of the data type of that parameter, not the name of the parameter. In my case, I had a schema name in front of my data type name, which is what I’ve shown here.

- The final thing is the mapping and here you’re mapping the source (in my case from a file) columns to columns in that user defined table data type.

A little bit tricky but I hope this overview of how to use a Stored Procedure as a sink in Azure Data Factory was helpful. Need further help? Our expert team and solution offerings can help your business with any Azure product or service, including Managed Services offerings. Contact us at 888-8AZURE or [email protected].