In this series, learn how 3Cloud is helping a large local government with user and data security as they modernize operational reporting using Power BI.

3Cloud recently worked with public safety agencies that are a part of a large, combined City-County government, focusing on modernizing systems, while also keeping data secure within and across these agencies. This project helped securely develop and distribute sensitive data and reports while also monitoring and auditing Power BI usage to meet internal, state, and federal data protection requirements.

Challenges

All levels of government struggle to manage data quality, which can often make reporting challenging as end-users question the accuracy and usability of the information. Specific to departments of public safety, there are issues and questions related to security and data auditing as they consider moving to cloud computing.

- Will the agency’s data be stored outside the agency databases?

- What if the agency or department has a policy against having data in the cloud?

- With data spanning dozens of departments and tens of thousands of users, what if the data is too large to move or fit in a reporting tool?

- Will the data in the reports reflect real-time updates that occur in the database?

These questions are central concerns for incorporating a reporting solution into an agency. The potential for moving to a tool like Power BI means giving end-users the power to create and share their own reports and easily filter and drill down into information to get a better understanding about the key pieces of information that can drive decision-making. The alternative is emailing PDFs or spreadsheets, which are almost immediately out of date and difficult to track.

The good news is that Power BI is able to comply with all of the questions asked above. What follows is a technical walk-through of the steps 3Cloud took in this project. If you are interested in more information about how Power BI can work for your organization, check out some of our resources.

Solutions

Concerns about Power BI data storage and the cloud are largely answered by one question: How does Power BI connect to the data? Power BI has two primary options for connecting to data: DirectQuery and Import (and a third hybrid option called “composite model”, but we will set that aside for now). Of course, there are many nuances to how those connection methods work, but we’re going to just focus on the ones relevant to the data storage concerns of government agencies. In brief, here’s how they both work:

Import: When the data source is set to “Import,” the data will be imported and stored in the .pbix file. Because the data is stored in the file, when that file is published to the Power BI Service (i.e., the cloud), the data follows.

DirectQuery: Setting the data source to “DirectQuery” only creates the connection between the Power BI data model and the data in the source. It does not store the data in the file. Based on those simple definitions, it looks like DirectQuery is the way to go for mitigating data in the cloud. But there are a few questions that need answering before switching all connections to DirectQuery.

- What are the pros and cons of DirectQuery?

- How does the connection method influence report development process?

- What about report performance?

- Can setting the connections to DirectQuery really avoid sending all data to the cloud?

Let’s start with the last one: Does DirectQuery prevent data from being stored in the cloud? For the most part, yes. When DirectQuery is used to connect to the data source, only the connection information is stored in the file, so when published to the cloud the data is not contained in the file. While the data itself is not published to the cloud, the metadata is included in the file. Things like table names, schemas, and measure names are stored in the file. The metadata is a necessary part of the connection details, and even DirectQuery cannot minimize that part of the data being sent to the cloud.

Now that we have uncovered that the Power BI Service can accommodate an on-premises data requirement, there are a couple of other key considerations. Unlike an Imported data set, which issues queries against a cached dataset in-memory, DirectQuery sends a query back to the data source each time a visual is refreshed, filtered, etc. As one might expect, an imported dataset can perform much faster than DirectQuery. However, DirectQuery performance is highly dependent on network speed and data source configurations, so there are plenty of options for tweaking to optimize performance.

By transitioning to DirectQuery in order to manage data storage requirements, it’s important to know that certain features of the data modeling capabilities in Power Query are limited. Report development teams will need to be aware of how their development practice – specifically around data modeling – will change with DirectQuery.

Now let’s dig into the details of how to implement DirectQuery.

Implementation

From our experience working with government agencies, we know that oftentimes they have policies or preferences around storing data in the cloud. Using the cloud-based Power BI Service doesn’t have to conflict with an on-premises requirement if you utilize DirectQuery for your data sources. Let’s look at how to go about implementing that DirectQuery for government agency data reporting needs.

Configure your data source

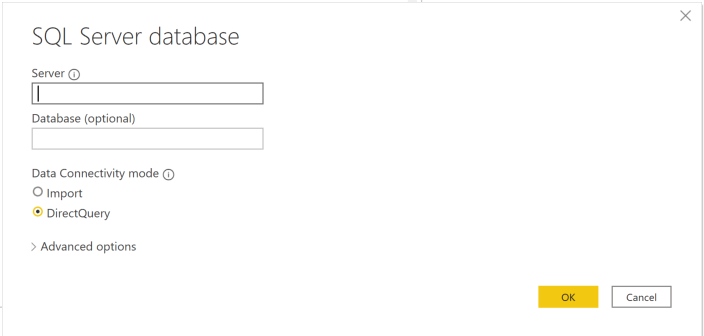

Setting up DirectQuery starts during the initial connection set up in Power BI Desktop. As mentioned above, when selecting “Get Data” and choosing a database option, there are two different Data Connectivity modes.

After the data is connected via DirectQuery, there will be the opportunity to model and transform the data in the Power Query editor. Features in the Power Query editor are limited with DirectQuery and care is required or there is the potential to unintentionally undo the DirectQuery setup. When executing many of the transformations a warning will display: “This step results in a query that is not supported in DirectQuery mode” with the option to switch the tables to import mode. Stop right there!

Switching to import mode will negate the efforts of not storing data in the cloud. Instead, it’s best to limit which transformations are performed in Power Query and make the transformations back into the source system.

Checking file size

Once the data is modeled with DirectQuery (and the rest of the report is built), the file size will be substantially smaller when only storing the metadata instead of importing the data. A small file size is a good indicator that DirectQuery is set up successfully.

Publishing to the Power BI Service

Now comes the time to publish the report – but not the data – to the cloud service. From Power BI Desktop, developers can publish the reports to a Workspace just like they would for any other report. However, when viewing the report in the Power BI Service, a notice will display across the top.

For the data to live on-premises and be available to display through DirectQuery in the Power BI Service, the data needs to go through a Gateway. A Gateway is a piece of software installed on a server that connects the on-premises data source with the cloud service. After the gateway is configured with credentials for the data source and Power BI account, published reports can directly query on-premises data from the cloud.

Implementation Results

Once reports with DirectQuery configured are published to the Power BI Service, a few key results are available.

- Near Real-Time Data is available. One advantage of the DirectQuery data source is that the report data is kept in sync with your data source. DirectQuery issues a query against the data source and returns the most recent version of your data source. Imported data sets require configured scheduled refreshes for the report data to reflect the data source. With the Gateway up and running, a DirectQuery report always has the most up to date data.

- Data size has (nearly) no limits. Rather than facing the size limits imposed for data imported into a Power BI model, DirectQuery provides access to nearly unlimited data volumes. The space required to store the dataset metadata is minimal and removes the storage space ceiling in the Power BI Service.

- Data isn’t stored in the cloud. This, of course, is an important requirement for government agencies. The data remains in the on-premises or existing secure data infrastructure.

Summary

A government agency that is moving to Power BI from a legacy system, or trying to increase adoption of the platform, must face the handle the questions of how to connect and store data. Power BI offers great flexibility for agencies that are cloud adverse, cloud-friendly, or somewhere in between. It can scale to accommodate massive datasets but is also nimble enough for departmental data marts.

Learn More

Follow these links to learn more about how 3Cloud has helped other municipalities – including evolving education strategies, fostering traffic safety, and ensuring water quality. Or take a moment to check out our Modern Data Platform and Modern BI pages to discover how the right advanced technology can transform outcomes.