From March 23rd through the 25th, a small group of us at 3Cloud participated in Microsoft’s AI: Look, Listen, Innovate! Solution Hack contest. The hackathon focused on solving interesting problems with AI models deployed on the edge. So, how’d it go?

In large urban settings, monitoring streets is a cumbersome task for municipalities. This information matters because many short- and long-term decisions are made at the street-block level, including maintenance scheduling, transportation planning, and understanding crowd flow. Data could be collected with traditional security cameras and the footage analyzed on a remote server, but this raises questions about public privacy: what information is being captured, and how is it being used?

Our project centered on building a solution that lets smart cities collect information about busy streets without infringing on public privacy. That means analyzing data on the edge and relaying only relevant, non-private information back to the city.
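To make the "only relay non-private information" idea concrete, here is a minimal sketch of the kind of reduction an edge device could perform before anything leaves it. It is not our hackathon code: it assumes each detection from the vision model arrives as a dict with `label` and `confidence` keys, and the class list and `summarize_frame` helper are hypothetical. Only per-class counts survive; boxes and pixels are dropped.

```python
from collections import Counter
from typing import Iterable, Mapping

# Hypothetical set of object classes we care about on a street.
CLASSES_OF_INTEREST = {"person", "car", "bicycle", "bus", "truck"}


def summarize_frame(detections: Iterable[Mapping], min_confidence: float = 0.5) -> dict:
    """Reduce one frame's detections to per-class counts.

    Each detection is assumed to be a dict with at least "label" and
    "confidence" keys. Everything else (bounding boxes, crops, the frame
    itself) is discarded, so only aggregate counts would leave the device.
    """
    counts = Counter(
        d["label"]
        for d in detections
        if d["label"] in CLASSES_OF_INTEREST and d["confidence"] >= min_confidence
    )
    return dict(counts)


if __name__ == "__main__":
    # Made-up detections for illustration only.
    frame = [
        {"label": "person", "confidence": 0.91, "bbox": [0.10, 0.20, 0.30, 0.60]},
        {"label": "person", "confidence": 0.42, "bbox": [0.50, 0.20, 0.60, 0.70]},
        {"label": "car", "confidence": 0.88, "bbox": [0.60, 0.50, 0.90, 0.90]},
    ]
    print(summarize_frame(frame))  # {'person': 1, 'car': 1}
```

The confidence threshold is a design choice: raising it trades missed objects for fewer false positives in the counts the city ultimately sees.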

About Edge Computing

Edge computing, put simply, is using devices close to where data is generated to collect and process that data, rather than relying entirely on powerful central servers to do all the heavy lifting. Microsoft uses the term “Intelligent Edge” for smart devices that work together with the cloud; the biggest benefits are real-time insights and highly responsive data processing.

Azure Percept (Previously Project Santa Cruz)

For this hackathon, everyone used the Azure Percept dev kit, which comes with a board built around a system on a chip (think of a more powerful Raspberry Pi), an RGB camera, a microphone array, a wireless antenna, and all of the cables and accessories needed to get started. There is also a deployment template that gets you up and running quickly and connects your device to your Azure environment.

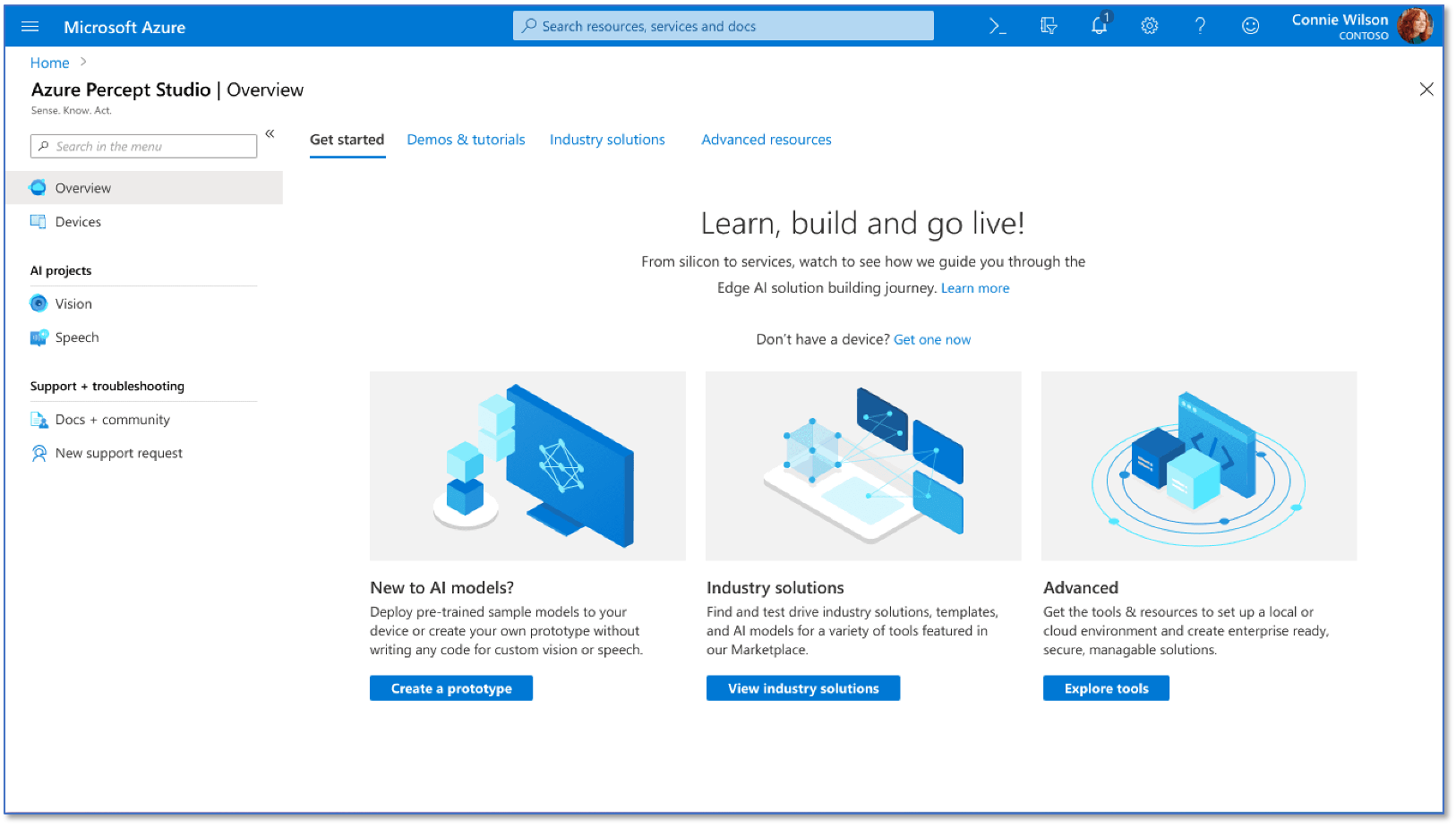

In addition to the device, Azure Percept has a Studio component that makes it easy to manage devices and to train and deploy AI models on the edge. Together with Percept Studio, the dev kit makes it easy to explore new edge AI use cases without complex hardware prototyping or convoluted device management.

Our Smart City Solution

Understanding multimodal transportation in cities enables better planning and more efficient use of resources, and provides better insight into public behavior. Computer vision-based street monitoring can provide deeper insight into pedestrian density, parking scarcity, and road conditions.

Our edge-based approach ensures the privacy of public data while providing real-time information to municipalities across their city. This AI-centric monitoring solution will be able to remotely track multiple factors on a given city street.

So, How’d We Do?

In three days, plus a bit of prep work ahead of time, we deployed a working edge-based solution for street monitoring. This included deploying our computer vision models on the device and connecting the device to the cloud to capture street statistics.

Our prototype captured object information from the camera and relayed it through Azure IoT Hub into our data lake, where it can be queried using Azure Synapse or visualized in Power BI. With AI models deployed on the edge, decision makers get visibility into the metrics of interest on a city street without ever seeing the actual camera feed, which addresses the main privacy concern along the way.
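As a rough illustration of what flows through that pipeline, the sketch below serializes a per-frame count summary as a JSON message body and then rolls such messages up per street and hour, similar to what a downstream Synapse query or Power BI measure might compute. The schema (`streetId`, `timestamp`, `counts`) and both helper functions are hypothetical, not the format we actually used. On a device using the Azure IoT device SDK for Python (`azure-iot-device`), a body like this could be wrapped in a `Message` and sent with `IoTHubDeviceClient.send_message`; on Azure Percept, telemetry may instead flow through IoT Edge module routing.

```python
import json
from collections import defaultdict
from datetime import datetime, timezone


def to_telemetry(street_id: str, counts: dict, timestamp: datetime) -> str:
    """Serialize one per-frame summary as a JSON message body.

    The field names are a hypothetical schema. `timestamp` must be
    timezone-aware; it is normalized to UTC before serializing.
    """
    return json.dumps({
        "streetId": street_id,
        "timestamp": timestamp.astimezone(timezone.utc).isoformat(),
        "counts": counts,
    })


def hourly_totals(messages):
    """Sum counts per (street, UTC hour), similar to a downstream query."""
    totals = defaultdict(lambda: defaultdict(int))
    for raw in messages:
        msg = json.loads(raw)
        ts = datetime.fromisoformat(msg["timestamp"])
        hour = ts.replace(minute=0, second=0, microsecond=0)
        for label, n in msg["counts"].items():
            totals[(msg["streetId"], hour)][label] += n
    # Convert nested defaultdicts to plain dicts for easy printing/comparison.
    return {key: dict(per_label) for key, per_label in totals.items()}


if __name__ == "__main__":
    # Made-up sample messages for illustration only.
    t1 = datetime(2021, 3, 24, 14, 5, tzinfo=timezone.utc)
    t2 = datetime(2021, 3, 24, 14, 40, tzinfo=timezone.utc)
    messages = [
        to_telemetry("block-12", {"person": 3, "car": 1}, t1),
        to_telemetry("block-12", {"person": 2}, t2),
    ]
    for (street, hour), counts in hourly_totals(messages).items():
        print(street, hour.isoformat(), counts)
        # block-12 2021-03-24T14:00:00+00:00 {'person': 5, 'car': 1}
```

Keeping the message to counts plus a timestamp means nothing in the data lake can be traced back to an individual in the camera's view.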

Interested in Exploring AI on the Edge?

If your organization is interested in building state-of-the-art AI models and deploying them in remote scenarios, contact us today! 3Cloud’s team of experts in AI, IoT, and data platform services in the Azure cloud makes us the perfect partner for exploring how edge computing can transform your business.