I want to talk about Power BI Dataflow and how we can use it to turn Power BI into an ETL tool.

Going back to when we first began using Power BI, we used Power Query to query our data sources, perform translations and transformations on that data, and create data sets. Those data sets were not very reusable, as they were only available to the Power BI report they were built in.

Then Power BI introduced the capability to share data sets with other Power BI reports, so if I created a data set with a lot of transformations and published it, other Power BI users could build reports on that data set.

Now with Dataflow we can create data sets using Power BI that are accessible to several tools, not just Power BI. With a tool like Databricks, for instance, we can connect to a Dataflow.

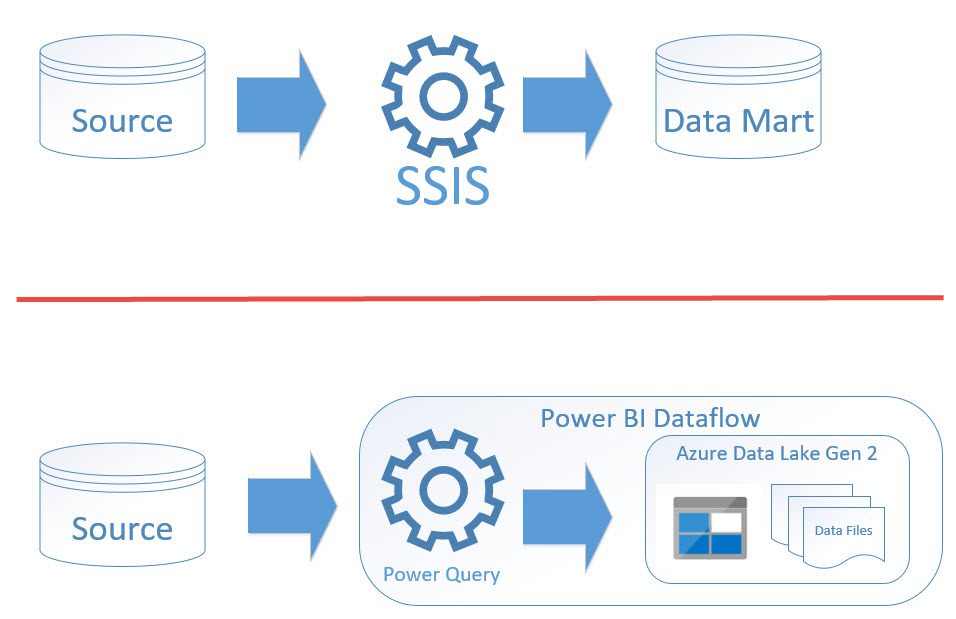

What does this all have to do with ETL? Let me explain. In this first screenshot I have a simple diagram of two data flows.

The top one shows a typical pattern we use today: SSIS pulls data out of a source system, performs some transformations, and then loads it into a data mart in a relational database schema. The bottom one shows Power BI Dataflow. Here we use Power Query just like we do today with Power BI, with the same tools and transformations, but the output is no longer a Power BI dataset. Instead, it is a set of files stored in Azure Data Lake Storage Gen2.

With that data stored in the Data Lake, we can use other tools to consume it. We are not limited to just Power BI. For example, we can point Databricks at it, or use it as a source for a machine learning model in Azure Machine Learning.
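To make "other tools can consume that data" a bit more concrete, here is a minimal Python sketch of reading Dataflow-style output without Power BI. It assumes the Dataflow writes a Common Data Model folder: a `model.json` file describing each entity, plus header-less CSV partition files. The entity name `Customers`, the sample rows, and the helper names `read_entity` and `build_sample_folder` are all made up for illustration, and the sketch uses a local temporary folder instead of a real lake so it runs offline. In a real lake the partition `location` is typically a full URL rather than a relative path.

```python
import csv
import json
import tempfile
from pathlib import Path


def read_entity(folder: Path, entity_name: str) -> list:
    """Return the rows of one entity as dicts keyed by attribute name."""
    model = json.loads((folder / "model.json").read_text(encoding="utf-8"))
    entity = next(e for e in model["entities"] if e["name"] == entity_name)
    # The CSV partitions carry no header row; column names come from model.json.
    columns = [attribute["name"] for attribute in entity["attributes"]]
    rows = []
    for partition in entity.get("partitions", []):
        # Assumption: "location" is a path relative to the folder.
        path = folder / partition["location"]
        with open(path, newline="", encoding="utf-8") as f:
            for record in csv.reader(f):
                rows.append(dict(zip(columns, record)))
    return rows


def build_sample_folder(folder: Path) -> None:
    """Write a tiny, made-up CDM-style folder so the sketch runs offline."""
    (folder / "Customers").mkdir()
    (folder / "Customers" / "part-0.csv").write_text(
        "1,Contoso\n2,Fabrikam\n", encoding="utf-8"
    )
    model = {
        "name": "SampleDataflow",
        "entities": [
            {
                "name": "Customers",
                "attributes": [
                    {"name": "CustomerId", "dataType": "int64"},
                    {"name": "Name", "dataType": "string"},
                ],
                "partitions": [
                    {"name": "part-0", "location": "Customers/part-0.csv"}
                ],
            }
        ],
    }
    (folder / "model.json").write_text(json.dumps(model), encoding="utf-8")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        sample = Path(tmp)
        build_sample_folder(sample)
        for row in read_entity(sample, "Customers"):
            print(row)
```

The point of the sketch is that the output is just files plus metadata, so any tool that can read CSV and JSON can pick it up. Values come back as strings here; a real consumer would use the `dataType` in `model.json` to convert them.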

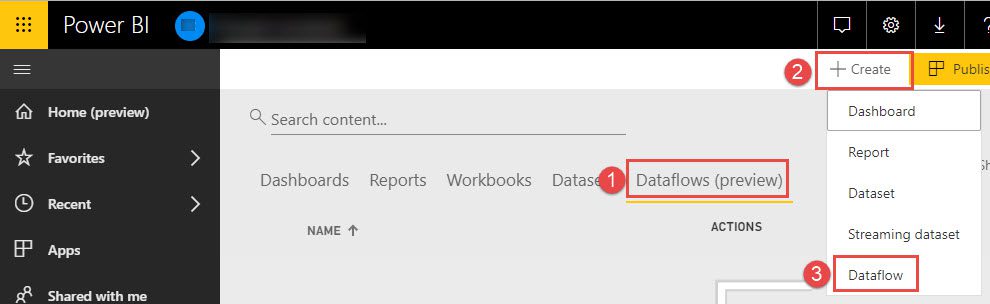

Dataflows are created through the Power BI Service. The screenshot below shows you how to do that.

- If you go to your workspace, you’ll see Dataflows marked as a preview. When you select Dataflows, you can see the Dataflows you already have, and you can create a new one by clicking in the upper right and choosing Dataflows.

- This opens an editor in your web browser using Power Query. You connect to your data sources and can perform all the same transformations that you can in Power BI Desktop, but it’s all done through your browser.

- After you’ve created your Dataflow (or the definition of it), you’ll refresh the data in that flow (just as you do in Power BI Desktop) and that will populate your Dataflow with data.

- Your Dataflow is now accessible as a data source for Power BI or other tools.
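The steps above are all done by hand in the Power BI Service. If you wanted to trigger the refresh step from a script instead, one option is the Power BI REST API. The sketch below only builds the HTTP request and does not send it. The function name `build_refresh_request`, the placeholder IDs, and the token are assumptions for illustration; getting a real Azure AD access token is out of scope here, and you should check the current REST API documentation before relying on the endpoint or the `notifyOption` value.

```python
import json
import urllib.request

API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def build_refresh_request(group_id: str, dataflow_id: str, access_token: str):
    """Build (but do not send) a POST request that asks for a Dataflow refresh."""
    url = f"{API_ROOT}/groups/{group_id}/dataflows/{dataflow_id}/refreshes"
    # Assumption: no e-mail notification is wanted for this refresh.
    body = json.dumps({"notifyOption": "NoNotification"}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


if __name__ == "__main__":
    # Placeholder values; replace with a real workspace ID, Dataflow ID and token.
    request = build_refresh_request("my-workspace-id", "my-dataflow-id", "my-token")
    print(request.get_method(), request.full_url)
    # To actually send it: urllib.request.urlopen(request)
```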

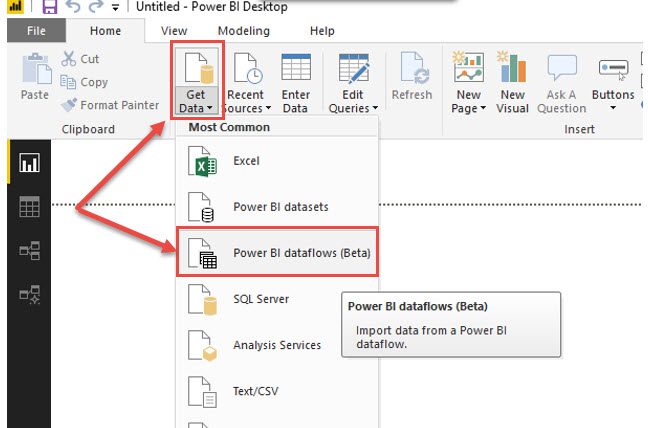

This last screenshot is a recent grab from Power BI showing that Power BI Dataflows are available as a data source in Power BI. You can use Power BI to connect to Dataflows, but as I mentioned, the data is stored in the Data Lake, so you can use other services to connect to that data as well.

I hope this quick introduction to Power BI Dataflow and how we can treat it as an ETL tool was helpful.
