This is a session recap of information presented by Kevin Webb during 3Cloud’s Envision Summit.

In this session, we cover the components of Microsoft Fabric and how they can improve your analytics and streamline your data workflow. We walk through the journey from data acquisition to data consumption and explore the details and use cases for Fabric. We also cover creating a Lakehouse, ingesting and transforming data using a medallion architecture, and consuming insights with Power BI.

What is Microsoft Fabric?

Fabric is a unified and simplified platform for managing data and the various analytical workloads across the organization. It provides a single SaaS platform to shorten time to insight and remove blockers for developers at all levels of the organization. The primary capabilities of Fabric include:

- Providing a single source of truth for your organizational data.

- Increasing data accessibility and democratization.

- Improving security, compliance, and governance across different analytics workloads and processes.

- Reducing management and integration overhead by leveraging a shared platform.

- Accelerating time to value with open and flexible analytics solutions.

What is OneLake?

Fabric streamlines existing Microsoft technologies into a single SaaS environment built on a single data lake called OneLake. The Fabric workloads that share OneLake are:

- Data Factory

- Synapse Data Engineering

- Synapse Data Science

- Synapse Data Warehousing

- Synapse Real-Time Analytics

- Power BI

- Data Activator

OneLake is a single, unified data lake for the entire organization, removing data silos. It enables all Fabric workloads and users to use the data from a single copy, removing the need to duplicate data. It provides full and open access using standard APIs and file formats. In OneLake, data is automatically indexed for discovery, governance, and compliance.

Understanding Workspaces in OneLake

OneLake enables distributed ownership through the use of workspaces. Workspaces can have their own administrator, access settings, geographical region, and capacity. This allows different users/business groups to contribute to OneLake, while maintaining control of their data.

What are Fabric Data Items?

All data is stored in Fabric data items, such as Data Warehouses, Lakehouses, KQL Databases, Power BI Datasets, or SQL Endpoints. Data is stored in folders and files, much like what you experience in OneDrive. No proprietary data storage formats are used: all data is stored in open file formats, and tabular data uses the Delta Lake format.
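Because items are exposed as folders and files, a file inside an item can be addressed with a path. The sketch below builds such a path using the ADLS-style `abfss://` URI form that OneLake accepts. The workspace and Lakehouse names are hypothetical, and the helper itself is only an illustration, not part of any Fabric SDK.

```python
ONELAKE_HOST = "onelake.dfs.fabric.microsoft.com"


def onelake_abfss_path(workspace: str, item: str, item_type: str, relative_path: str) -> str:
    """Build an abfss:// URI for a folder or file inside a Fabric data item.

    Follows the pattern <workspace>@<host>/<item>.<itemtype>/<path>.
    Names are used as-is; this sketch does not handle spaces or escaping.
    """
    relative_path = relative_path.strip("/")
    return f"abfss://{workspace}@{ONELAKE_HOST}/{item}.{item_type}/{relative_path}"


# Hypothetical workspace and Lakehouse names, for illustration only.
bronze_file = onelake_abfss_path(
    "QualityMeasures", "Bronze", "Lakehouse", "Files/lab_feeds/feed_a.csv"
)
print(bronze_file)
```

A Lakehouse separates unstructured content under `Files` from Delta tables under `Tables`, so the same helper can point at either area by changing the relative path.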

Shortcuts

Shortcuts are links that point from one data location to another, so data can be used virtually across items or workspaces without duplicating it or changing its ownership.

- Key Point: Shortcuts can include data outside of OneLake and even outside of Azure.

Fabric Data Platform Journey

Using a fictional project case study, Kevin guided viewers through a data platform journey using Fabric. He presented a scenario in which a healthcare organization needed to assess the quality of care its patients receive. One way to accomplish this is by tracking the rate at which the patient population receives routine procedures, a metric known as a Quality Measure. The healthcare organization received data feeds from multiple external organizations reporting on lab tests and results for the patient population. The data arrived in different formats with varying sets of attributes.
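To make the "different formats with varying sets of attributes" problem concrete, here is a minimal sketch of mapping two feeds onto one common record. The feed layouts, column names, and the `LabResult` model are all hypothetical; the session did not show the actual feed schemas, and in Fabric this step would more likely run in a Spark notebook than in plain Python.

```python
from dataclasses import dataclass
from datetime import date, datetime


@dataclass(frozen=True)
class LabResult:
    """Hypothetical common model shared by every feed."""
    patient_id: str
    test_code: str
    result_date: date
    source: str


def from_feed_a(row: dict) -> LabResult:
    # Assumed Feed A layout: snake_case columns, ISO 8601 dates.
    return LabResult(
        patient_id=row["patient_id"].strip(),
        test_code=row["test_code"].strip().upper(),
        result_date=date.fromisoformat(row["result_date"]),
        source="feed_a",
    )


def from_feed_b(row: dict) -> LabResult:
    # Assumed Feed B layout: different column names, US-style dates,
    # plus an extra "Facility" attribute that the common model ignores.
    return LabResult(
        patient_id=row["MemberID"].strip(),
        test_code=row["LabCode"].strip().upper(),
        result_date=datetime.strptime(row["ServiceDate"], "%m/%d/%Y").date(),
        source="feed_b",
    )


feed_a_rows = [{"patient_id": "P001", "test_code": "hba1c", "result_date": "2023-03-14"}]
feed_b_rows = [{"MemberID": " P002 ", "LabCode": "HbA1c", "ServiceDate": "04/02/2023", "Facility": "North"}]

common = [from_feed_a(r) for r in feed_a_rows] + [from_feed_b(r) for r in feed_b_rows]
for record in common:
    print(record)
```

Keeping one small adapter per feed means a new external organization only requires a new adapter; everything downstream keeps reading the same common model.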

Goals

- Ingest the source data to a raw landing area.

- Transform the source data to a common model and relate it to existing organizational master data.

- Enrich the transformed data to a star schema enabling quality measure calculation and reporting.

- Publish reports tracking actual quality measures against goals.
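The last two goals depend on calculating a quality measure and comparing it to a goal. As a rough sketch, assuming the measure is the share of eligible patients who received the routine procedure, the calculation could look like this. The patient IDs and the 80% goal are made-up values, and in the demo this logic would live in the reporting model rather than in Python.

```python
def quality_measure_rate(eligible_patients: set[str], patients_with_procedure: set[str]) -> float:
    """Share of eligible patients who received the routine procedure."""
    if not eligible_patients:
        raise ValueError("quality measure is undefined without eligible patients")
    # Only count procedures for patients who are actually in the eligible population.
    numerator = len(eligible_patients & patients_with_procedure)
    return numerator / len(eligible_patients)


# Made-up values for illustration only.
eligible = {"P001", "P002", "P003", "P004"}
screened = {"P001", "P003", "P004", "P999"}  # P999 is not in the eligible population
goal = 0.80

rate = quality_measure_rate(eligible, screened)
print(f"rate={rate:.0%}, goal={goal:.0%}, goal met={rate >= goal}")
```

Intersecting the two sets keeps results for patients outside the measured population from inflating the rate.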

Teams

Several teams are needed to coordinate activities in the Fabric Data Platform Journey. In this scenario, we include three teams.

Data Engineering Team

- Responsible for data ingestion and transformation

- Knowledge of data lakes, data modeling, Python, and Spark

- Works in Notebooks

Master Data Team

- Responsible for maintaining master data

- Knowledge of relational databases, data modeling, and SQL

- Works in SQL

Reporting and Analytics Team

- Responsible for reports and visualizations

- Knowledge of visualization design, DAX, M, and data modeling

- Works in Power BI

Build Plan

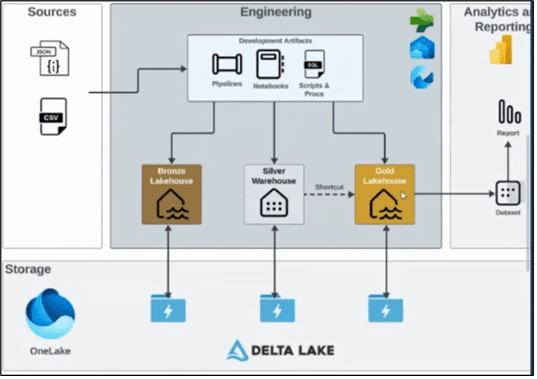

An architecture diagram illustrates the build plan. A live demo took us through the following steps.

- Initialize

  - Create Workspace

  - Create Lakehouse and Warehouse items

- Build Bronze

  - Upload files to Bronze Lakehouse Files location

  - Load file data to Bronze Lakehouse Tables

- Build Silver

  - Load master data to Silver Warehouse

  - Create shortcut to Bronze Lakehouse

  - Transform Bronze data to Silver data

- Build Gold

  - Load Dimension Tables in the Gold Lakehouse

  - Load Fact Tables in the Gold Lakehouse

  - Create Gold reporting model

- Build Report

  - Build Quality Measure report from Gold model
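The Gold steps load dimension tables first and fact tables second because each fact row refers to dimension rows by key. The sketch below shows that ordering on in-memory data. The table names, columns, sample rows, and surrogate-key scheme are assumptions for illustration; the demo built these tables in the Gold Lakehouse, not in plain Python.

```python
def build_dimension(rows: list[dict], natural_key: str, attributes: list[str]) -> dict:
    """Return {natural key value: dimension row}, one surrogate key per distinct value."""
    dimension = {}
    for row in rows:
        key = row[natural_key]
        if key not in dimension:
            dimension[key] = {
                "sk": len(dimension) + 1,  # simple sequential surrogate key
                natural_key: key,
                **{name: row[name] for name in attributes},
            }
    return dimension


def build_fact(rows: list[dict], dim_patient: dict, dim_test: dict) -> list[dict]:
    """Replace natural keys with surrogate keys looked up in the dimensions."""
    return [
        {
            "patient_sk": dim_patient[row["patient_id"]]["sk"],
            "test_sk": dim_test[row["test_code"]]["sk"],
            "result_date": row["result_date"],
        }
        for row in rows
    ]


# Hypothetical Silver rows in a common model.
silver_rows = [
    {"patient_id": "P001", "region": "North", "test_code": "HBA1C", "test_name": "Hemoglobin A1c", "result_date": "2023-03-14"},
    {"patient_id": "P002", "region": "South", "test_code": "HBA1C", "test_name": "Hemoglobin A1c", "result_date": "2023-04-02"},
    {"patient_id": "P001", "region": "North", "test_code": "LDL", "test_name": "LDL cholesterol", "result_date": "2023-05-20"},
]

# Dimensions first, then the fact table that references them.
dim_patient = build_dimension(silver_rows, "patient_id", ["region"])
dim_test = build_dimension(silver_rows, "test_code", ["test_name"])
fact_lab_result = build_fact(silver_rows, dim_patient, dim_test)

for fact in fact_lab_result:
    print(fact)
```

With facts keyed to dimensions this way, a report can slice the lab results by any patient or test attribute without repeating those attributes on every fact row.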

Watch the Fabric Data Platform demo to see the step-by-step process for building the Fabric Data Platform. Ready to learn more? Get started with 3Cloud today.